Git Reference

Creating: init, branch

Adding and removing files: add, rm

Seeing activity: log, status

Basic repository operations: push, pull, commit, checkout, clone, fetch, merge

Undoing changes: reset, checkout, revert

Seeing files in the repo: ls-files

Finding stuff: grep

Labeling: tag

Some git cheat sheets:

http://help.github.com/git-cheat-sheets/

http://cheat.errtheblog.com/s/git

How do I see the differences between file versions?

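git diff <commit hash> <filename>

This compares the file in your working directory with its version in that commit. To compare the file between two commits, use git diff <commit hash 1> <commit hash 2> -- <filename>.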

How do I go back to version x?

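Find the hash of the commit you want (git log lists them). Then, to restore a specific file to its state in that commit, use the checkout command:

git checkout <commit hash> -- <filename>

This overwrites any local modifications to that file, so commit or stash anything you want to keep first. Note that git reset <commit hash> <filename> only changes the staged (index) copy of the file, not your working copy, and git reset refuses the --hard option when it is given a file path.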

A good workflow for managing waypoints is to use tags to cleanly mark points in your timeline. If what you want is to diverge a branch from a previous point in time, use the handy checkout command:

git checkout <commit hash>

git checkout -b <new branch name>

You can then rebase that against your mainline when you are ready to merge those changes:

git checkout <my branch>

git rebase master

git checkout master

git merge <my branch>

How do I find/look at a file's history (log)?

git log <filename>

http://book.git-scm.com/3_reviewing_history_-_git_log.html

Using --stat with log will show what files changed and by how much.

git log --stat

There is also a --pretty option that provides several nicer ways of presenting the results:

git log --pretty=oneline

git log --pretty=short

git log --pretty=format:'%h was %an, %ar, message: %s'

You can also use 'medium', 'full', 'fuller', 'email' or 'raw'. If those formats aren't exactly what you need, you can also create your own format with the '--pretty=format' option (see the git log docs for all the formatting options).
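If you want to process the history in a script rather than read it, a custom format with an unusual field separator is easy to split. The Python 3 sketch below assumes the ASCII unit separator (written %x1f in a git format string) never appears in author names or subjects; the helper names parse_log and git_log are hypothetical, and git_log simply shells out to git log in the current repository.

```python
import subprocess
from typing import Dict, List

# Assumption: fields are separated by the ASCII unit separator (0x1f),
# which git writes for the %x1f placeholder in a pretty format.
SEP = "\x1f"
LOG_FORMAT = "%h%x1f%an%x1f%ar%x1f%s"


def parse_log(output: str) -> List[Dict[str, str]]:
    """Split `git log --pretty=format:LOG_FORMAT` output into one dict per commit."""
    entries = []
    for line in output.split("\n"):
        if not line:
            continue  # skip blank lines
        # maxsplit=3 keeps the subject intact even if it contains the separator
        short_hash, author, when, subject = line.split(SEP, 3)
        entries.append(
            {"hash": short_hash, "author": author, "when": when, "subject": subject}
        )
    return entries


def git_log(path: str = ".") -> List[Dict[str, str]]:
    """Run git log for `path` in the current repository and parse the result."""
    result = subprocess.run(
        ["git", "log", "--pretty=format:" + LOG_FORMAT, "--", path],
        capture_output=True,
        text=True,
        check=True,
    )
    return parse_log(result.stdout)
```

For example, git_log("hello.rb") would return a list of dicts with hash, author, when and subject keys, newest commit first.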

How do I roll back/throw away current changes?

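Use checkout if you haven’t committed yet. The first command below restores hello.rb from the staging area (index); the second restores it from the last commit (HEAD), which also discards any staged changes to it.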

$ git checkout -- hello.rb

$ git checkout HEAD hello.rb

http://book.git-scm.com/4_undoing_in_git_-_reset,_checkout_and_revert.html

Use revert to fix committed mistakes.

You have to be careful when you say "rollback". If you used to have one version of a file in commit $A, and then later made two changes in two separate commits $B and $C (so what you are seeing is the third iteration of the file), and if you say "I want to roll back to the first one", do you really mean it?

If you want to get rid of the changes from both the second and the third iteration, it is very simple:

$ git checkout $A file

and then you commit the result. The command says "check out the file as it was recorded in commit $A".

On the other hand, if what you meant is to get rid of the change that the second iteration (i.e. commit $B) brought in, while keeping what commit $C did to the file, you would want to revert $B:

$ git revert $B

Note that whoever created commit $B may not have been very disciplined and may have committed totally unrelated changes in the same commit, so this revert may touch files other than the one where you see the offending changes. You may want to check the result carefully afterwards.
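To make the difference between checking out $A and reverting $B concrete, here is a toy model in Python 3. It is not how git works internally (git stores snapshots and computes a revert with a three-way merge); it only treats each commit as a set of line replacements, and the names apply, invert, A, B and C are illustrative.

```python
from typing import Dict, List, Tuple

# Toy model only: a commit's change is a dict of line index -> (old, new).
# Real git stores snapshots and reverts with a three-way merge.
Patch = Dict[int, Tuple[str, str]]


def apply(lines: List[str], patch: Patch) -> List[str]:
    """Return a copy of lines with the patch applied; raise on a mismatch."""
    result = list(lines)
    for index, (old, new) in patch.items():
        if result[index] != old:
            raise ValueError(
                f"conflict at line {index}: found {result[index]!r}, expected {old!r}"
            )
        result[index] = new
    return result


def invert(patch: Patch) -> Patch:
    """Swap old and new: the change a revert of this commit would apply."""
    return {index: (new, old) for index, (old, new) in patch.items()}


A = ["red", "green", "blue"]          # file as recorded in commit $A
B: Patch = {0: ("red", "crimson")}    # commit $B changes line 0
C: Patch = {2: ("blue", "navy")}      # commit $C changes line 2

current = apply(apply(A, B), C)       # third iteration: what you see now
checked_out = list(A)                 # like `git checkout $A file`: $A's snapshot
reverted = apply(current, invert(B))  # like `git revert $B`: undo only $B
print(current, checked_out, reverted)
```

Here checked_out throws away both later changes, while reverted keeps $C's "navy" and only restores the line $B touched.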

Disclaimer: I created the layout of this document, along with the questions I wanted answered for myself. The answers are gleaned and lightly edited from results I found during the research process. Quite a few answers were found on stackoverflow.com.

Python Coding Standard, Metrics and Test Coverage

Motivation

My motivation in seeking a coding standard, a static code metrics analyzer and a test coverage tool is multifaceted. I want to know that my Python code is formatted in a way that is accepted by the community. I want to be able to quickly check the cyclomatic complexity of code. I also intend to test-drive my code, so I wanted a tool that could show me and others the level of code coverage and any areas that need to be brought under test.

Note that the preferred download for all three of these tools is a .tar.gz format file. On a Windows system you’ll need a tool like 7-zip. All of this guidance is intended for use with Python 2.7 and PyCharm 1.5.4. You need to add C:\Python27\ to your PATH environment variable in order to successfully install these tools.

PEP8

PEP8 is a tool that checks whether your Python code follows the formatting conventions of the PEP 8 style guide. Download it from here: http://pypi.python.org/pypi/pep8

Extract the PEP8 folder. Using a command prompt, change to the extracted PEP8 folder and run: python setup.py install. There should now be a pep8.exe and a pep8-script.py in your Python installation's Scripts directory. You can now delete the extracted PEP8 folder.

PEP8 with PyCharm

From http://www.in-nomine.org/2010/12/14/pycharm-and-external-lint-tools/

PyCharm already includes many of the lint/check features found in various tools, but it also offers a way to hook up external tools. Under File > Settings there is a section called IDE Settings. One of the headings there is called External Tools. Select this heading and then press the Add... button in the right-hand pane to configure a new external tool.

In the Edit Tool window that now appears, fill in a name, e.g. PEP8, a group name, e.g. Lint, and a description. Next, point Program to the location of the pep8.exe executable, e.g. C:\Python27\Scripts\pep8.exe. For Parameters use $FilePath$, and set Working directory to the Python Scripts directory. Once done, close the window by pressing the OK button.

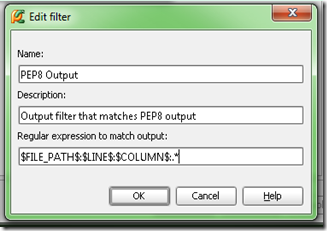

Now add a filter to the external tool to get click-and-go-to behavior.

See http://www.jetbrains.com/pycharm/webhelp/add-filter-dialog.html for how to add filters.

Use this for the filter spec: $FILE_PATH$:$LINE$:$COLUMN$:.*

Select a file in either the navigator or the editor pane.

Then, from the menu, go to Tools > Lint > PEP8.

The PEP8 output will contain links you can click to jump to each reported line.
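The filter spec works because pep8 reports each problem on its own line as path:line:column: code message. The toy checker below is not pep8; it only implements two checks in that output shape (trailing whitespace, reported as W291, and lines over 79 characters, reported as E501), plus a regular expression that plays roughly the role of PyCharm's $FILE_PATH$:$LINE$:$COLUMN$:.* filter. The names check_source and REPORT_LINE are hypothetical.

```python
import re
from typing import List

MAX_LINE_LENGTH = 79  # PEP 8's recommended maximum line length

# Roughly what PyCharm's $FILE_PATH$:$LINE$:$COLUMN$:.* filter matches.
# The non-greedy path group still copes with Windows drive letters (C:\...).
REPORT_LINE = re.compile(r"^(?P<path>.+?):(?P<line>\d+):(?P<col>\d+):.*$")


def check_source(path: str, source: str) -> List[str]:
    """Toy checker: report two PEP 8 problems as path:line:col: CODE message."""
    problems = []
    for lineno, line in enumerate(source.splitlines(), start=1):
        stripped = line.rstrip()
        # Trailing whitespace after code (whitespace-only lines are skipped here).
        if stripped and stripped != line:
            problems.append(f"{path}:{lineno}:{len(stripped) + 1}: W291 trailing whitespace")
        # Overlong line, reported at the first column past the limit.
        if len(line) > MAX_LINE_LENGTH:
            problems.append(
                f"{path}:{lineno}:{MAX_LINE_LENGTH + 1}: E501 line too long "
                f"({len(line)} > {MAX_LINE_LENGTH} characters)"
            )
    return problems


# Parse each report line the way the PyCharm filter would.
for problem in check_source("demo.py", "x = 1   \n"):
    match = REPORT_LINE.match(problem)
    print(match.group("path"), match.group("line"), match.group("col"))
```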

Following my initial installation notes on a second machine, I was getting a urllib.parse error from pep8.exe: "no module named parse". This problem seemed to be related to distribute. I pulled down the latest from http://pypi.python.org/pypi/distribute, but it wouldn't install. It looked like an issue with C:\python27\lib\urllib2.py. The web pointed me to reinstall setuptools (http://pypi.python.org/pypi/setuptools). I installed that and tried to install distribute again, still with no luck. I ended up running python setup.py install to get distribute installed. Now PEP8 works!

PyMetrics

PyMetrics is a tool for doing static code analysis. Download it from https://github.com/ipmb/PyMetrics/downloads (there is also a tar.gz on SourceForge, but its pymetrics runner does not have the .py extension, which causes problems with extraction on a Windows system).

Extract your downloaded file, change to that directory, and run python setup.py install. You can then delete the extracted folder. If you now look in your Python Scripts directory, you'll find a pymetrics.py.

Set up as an external tool in PyCharm as with PEP8.

The --nosql and --nocsv options tell the tool not to generate the associated SQL insert code or the related CSV file.

Sample output from DarkMatterLogger.py

An earlier version of the DarkMatterLogger.py that was analyzed can be found here: https://gist.github.com/1218497

C:\Python27\python.exe C:\Python27\Scripts\pymetrics C:\macts\source\spikes\DarkMatterLogger.py

=== File: C:\macts\source\spikes\DarkMatterLogger.py ===

Module C:\macts\source\spikes\DarkMatterLogger.py is missing a module doc string. Detected at line 1

Basic Metrics for module C:\macts\source\spikes\DarkMatterLogger.py

--------------------------------------------------------------

4 maxBlockDepth

12 numBlocks

3726 numCharacters

2 numClasses

15 numComments

5 numFunctions

24 numKeywords

104 numLines

668 numTokens

14.42 %Comments

Functions DocString present(+) or missing(-)

--------------------------------------------

- DarkMatterLogger.__init__

- DarkMatterLogger.sendMessage

- DarkMatterViewer.__init__

- DarkMatterViewer.__init__.msg_consumer

- main

Classes DocString present(+) or missing(-)

------------------------------------------

- DarkMatterLogger

- DarkMatterViewer

McCabe Complexity Metric for file C:\macts\source\spikes\DarkMatterLogger.py

--------------------------------------------------------------

1 DarkMatterLogger.__init__

1 DarkMatterLogger.sendMessage

1 DarkMatterViewer.__init__

2 DarkMatterViewer.__init__.msg_consumer

1 __main__

4 main

COCOMO 2's SLOC Metric for C:\macts\source\spikes\DarkMatterLogger.py

--------------------------------------------------------------

55 C:\macts\source\spikes\DarkMatterLogger.py

*** Processed 1 module in run ***

Process finished with exit code 0

Coverage.py

Coverage.py is a tool for doing code coverage analysis. Download it here: http://pypi.python.org/pypi/coverage. If you have a 64-bit installation, make sure you use the .tar.gz rather than a prepackaged .exe.

I downloaded the coverage-3.5.1.tar.gz version. Extract the folder from the gzipped tar file, change to the extracted directory, and run: python setup.py install. If you now look in c:\python27\lib\site-packages you will see coverage-3.5.1-py2.7.egg, and in the Scripts directory you will see coverage.exe and coverage-script.py.

Gather metrics on your code with: coverage run class.py

Then get the report with: coverage report -m

The -m option shows the line numbers of statements that were not executed. Use coverage erase to get rid of data from previous runs; during my experimenting, though, every run replaced the previous data anyway.
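Coverage.py records which lines actually run and compares them with the lines that could have run; the Missing column is the difference. A toy version of that idea for a single function, using the standard sys.settrace hook and dis.findlinestarts, is sketched below. It is an illustration only (one function, no branch coverage, no multi-file report), and run_with_line_trace, executable_lines and classify are hypothetical names.

```python
import dis
import sys
from typing import Any, Callable, Set, Tuple


def executable_lines(func: Callable) -> Set[int]:
    """Lines of func that start a bytecode run, excluding the def line itself."""
    code = func.__code__
    lines = {lineno for _, lineno in dis.findlinestarts(code) if lineno is not None}
    lines.discard(code.co_firstlineno)
    return lines


def run_with_line_trace(func: Callable, *args: Any) -> Tuple[Any, Set[int]]:
    """Call func(*args) and record which of func's own lines were executed."""
    executed: Set[int] = set()
    target = func.__code__

    def local_trace(frame, event, arg):
        if event == "line":
            executed.add(frame.f_lineno)
        return local_trace

    def global_trace(frame, event, arg):
        # Trace only frames running func's code object; ignore everything else.
        return local_trace if frame.f_code is target else None

    previous = sys.gettrace()
    sys.settrace(global_trace)
    try:
        result = func(*args)
    finally:
        sys.settrace(previous)  # restore any tracer that was already installed
    return result, executed


def classify(x: int) -> str:
    if x > 0:
        return "positive"
    return "non-positive"


result, executed = run_with_line_trace(classify, 5)
missing = sorted(executable_lines(classify) - executed)
print(result, "missing lines:", missing)
```

Calling classify with a positive number never reaches the last return, so that line shows up as missing, which is the same kind of information coverage report -m prints.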

Set it up as an external tool in PyCharm like the other tools, except that you need one tool for the run and another for the report. Others integrate coverage into their environment using nose.

Name: Coverage

For program: C:\Python27\Scripts\coverage.exe

For parameters: run $FileName$

Working directory: $FileDir$

Name: Coverage Report

For program: C:\Python27\Scripts\coverage.exe

For parameters: report -m

Working directory: $FileDir$

Coverage Sample Output

C:\Python27\Scripts\coverage.exe run C:\macts\source\spikes\ArgumentsTests.py

...........

----------------------------------------------------------------------

Ran 11 tests in 0.004s

OK

Process finished with exit code 0

Coverage Report Sample Output

C:\Python27\Scripts\coverage.exe report -m

Name Stmts Miss Cover Missing

----------------------------------------------

arguments 33 0 100%

argumentstests 45 0 100%

----------------------------------------------

TOTAL 78 0 100%

Process finished with exit code 0

Summary

By integrating these three tools into your development process, you’ll increase both the community acceptance and the quality of the code you produce.

Getting the Enron mail database into MongoDB

Background

In order to get some experience working with Python and MongoDB, I decided to find a data source with a lot of free-form text. This would give me experience in pulling the data into MongoDB, and at a future date I’d have a ready data source for learning NLTK. Finding a large dataset that met my needs turned out to be harder than expected. I came across Hilary Mason’s link page of research-quality data sets (now a dead link; see my collection, which includes as much of Hilary's as we could recover) and found the Enron email dataset. This data set contains over 500K emails. The emails are in individual files stored in a directory structure. To me, the first step in being able to use the data is to get it into a database where I can query it.

The environment

The code was developed on an Intel Core i7 machine with 6 GB of RAM and a 5400 RPM hard disk. This code is I/O-intensive and could have benefited from a faster hard disk or an SSD. The host OS was 64-bit Ubuntu 11.04. The tools used were Python 2.7 with pymongo and MongoDB.

The code
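As a rough sketch only (Python 3, not the original Python 2.7 loader), one way to walk the maildir tree and store one document per email, using the same fields the queries below rely on (mailbox, subFolder, filename, contents), might look like this. Assumptions: the dataset is laid out as <root>/<mailbox>/<folder>/.../<file>, bytes that are not valid UTF-8 fall back to Latin-1, documents are inserted in batches through pymongo's insert_many, and the helper names decode, iter_messages and load are hypothetical.

```python
import os
from typing import Any, Dict, Iterator, List

# Assumed layout: <root>/<mailbox>/<sub/folder/...>/<message file>


def decode(data: bytes) -> str:
    """Decode bytes of unknown encoding: try UTF-8, fall back to Latin-1."""
    try:
        return data.decode("utf-8")
    except UnicodeDecodeError:
        # Latin-1 maps every byte to a character, so this never fails
        # (though text in other encodings may come out garbled).
        return data.decode("latin-1")


def iter_messages(root: str) -> Iterator[Dict[str, str]]:
    """Yield one document per file with mailbox, subFolder, filename, contents."""
    for dirpath, dirnames, filenames in os.walk(root):
        dirnames.sort()  # walk subdirectories in a deterministic order
        rel = os.path.relpath(dirpath, root)
        if rel == ".":
            continue  # files directly under root belong to no mailbox
        parts = rel.split(os.sep)
        mailbox, sub_folder = parts[0], "/".join(parts[1:])
        for name in sorted(filenames):
            with open(os.path.join(dirpath, name), "rb") as handle:
                contents = decode(handle.read())
            yield {"mailbox": mailbox, "subFolder": sub_folder,
                   "filename": name, "contents": contents}


def load(root: str, collection: Any, batch_size: int = 1000) -> int:
    """Insert documents in batches via collection.insert_many; return the count."""
    batch: List[Dict[str, str]] = []
    total = 0
    for doc in iter_messages(root):
        batch.append(doc)
        if len(batch) >= batch_size:
            collection.insert_many(batch)
            total += len(batch)
            batch = []
    if batch:  # flush the final partial batch
        collection.insert_many(batch)
        total += len(batch)
    return total

# Usage with pymongo (assumes pymongo 3+ and a local mongod):
#   from pymongo import MongoClient
#   load("maildir", MongoClient().enron_mail.messages)
```

Batching keeps the number of round trips to the server down; on an I/O-bound run like the one described here, the disk reads are still likely to dominate.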

How to query

Once you have loaded the data, you can use the mongo shell to run queries. Switch to the database with use enron_mail and you can do the following:

db.messages.find({ contents : /query text/i }).limit(1).skip(0);

Besides contents, each document also includes the fields mailbox, subFolder and filename.
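The same case-insensitive search can be issued from Python with pymongo using MongoDB's $regex and $options operators. The sketch below assumes the enron_mail.messages collection from the shell example; contents_filter is a hypothetical helper, and escaping the search text with re.escape (so it is matched literally rather than as a pattern) is my own design choice.

```python
import re
from typing import Any, Dict


def contents_filter(text: str, literal: bool = True) -> Dict[str, Any]:
    """Case-insensitive match on contents, like /query text/i in the shell."""
    pattern = re.escape(text) if literal else text
    return {"contents": {"$regex": pattern, "$options": "i"}}

# Usage with pymongo (assumes a local mongod with the enron_mail database):
#   from pymongo import MongoClient
#   messages = MongoClient().enron_mail.messages
#   for doc in messages.find(contents_filter("query text")).skip(0).limit(1):
#       print(doc["mailbox"], doc["subFolder"], doc["filename"])
```

Pass literal=False if you want to write the regular expression yourself, as in the shell query.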

Here are some additional links with material on the shell and how to query:

http://www.mongodb.org/display/DOCS/Overview+-+The+MongoDB+Interactive+Shell http://www.mongodb.org/display/DOCS/Tutorial http://www.mongodb.org/display/DOCS/Querying http://www.mongodb.org/display/DOCS/Advanced+Queries

Analysis

Important things to note about the code:

Here are some references on Unicode:

http://docs.python.org/howto/unicode.html http://stackoverflow.com/questions/4685568/importing-file-with-unknown-encoding-from-python-into-mongodb

The full run took about 21 minutes, following an initial run that probably left many of the files in the cache. The run maxed out a single CPU core, and the process was I/O-bound on the hard drive. The full 6 GB of RAM on the machine was in use.

My query of MongoDB says I have 517,424 emails in the document store. It shouldn’t be too difficult to modify the code to work with your database of choice.

I hope you find this code useful and that it enables you to do some analysis with this dataset.